Introduction

This happened when the product I had been building was finally in decent shape and I started preparing a landing page before launch. I began using AI to help me articulate the concept more clearly, and the explanation quickly became more polished.

But the more polished it became, the stronger a certain discomfort grew. It was logically sound. It even seemed to fit a real need. And yet it no longer felt quite like the thing I had actually wanted to build.

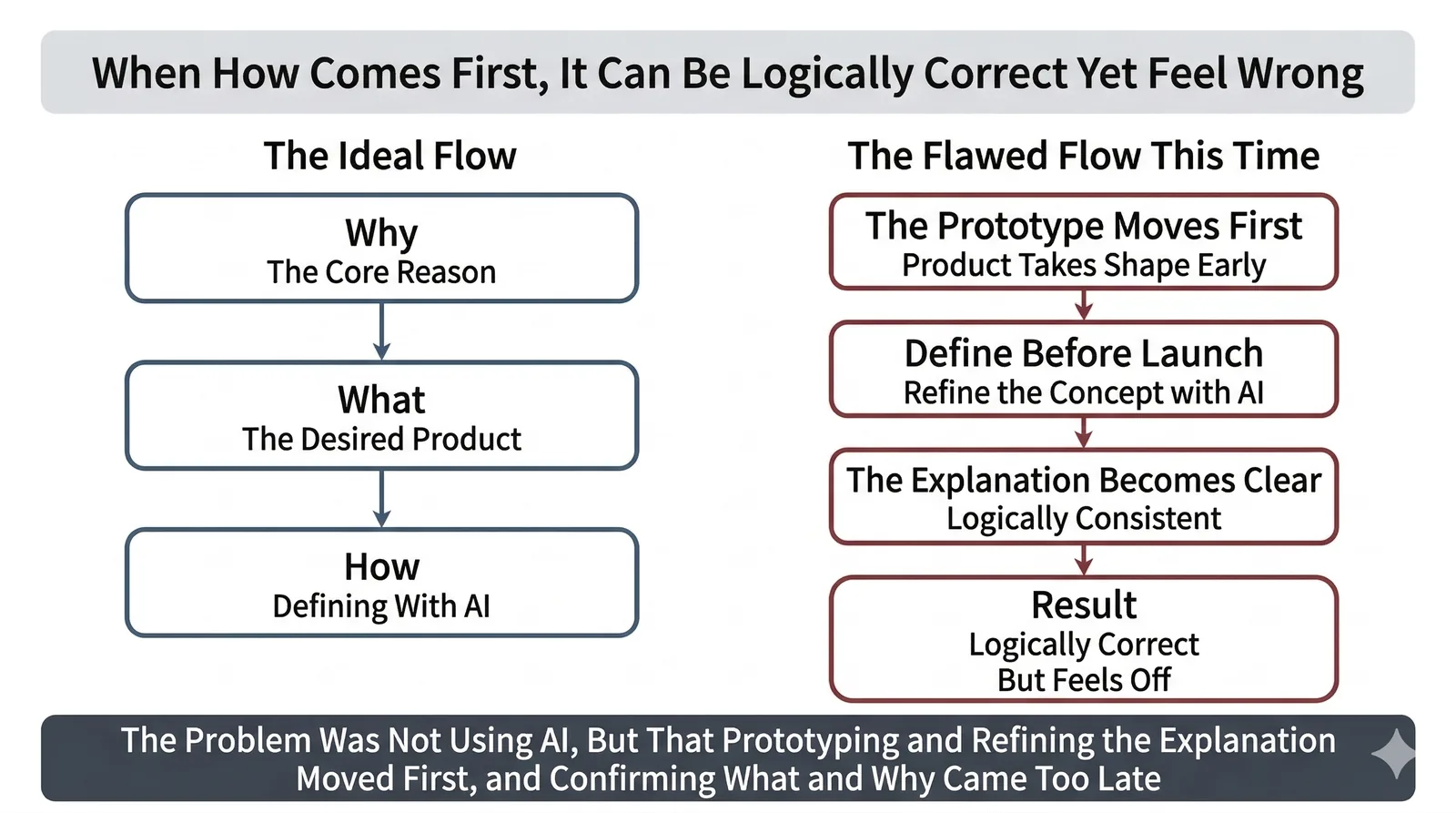

Looking back, the problem was not that I used AI for the discussion itself. The real issue was that I let prototyping and explanation move forward while the confirmation of my Why and What stayed in the background.

In this article, I want to use that failure to think through what humans should still hold onto in the age of AI, and what we can safely delegate.

What This Article Covers

- Why it is easy to lose sight of what you actually want to build while using AI as a thought partner

- What people should keep in their own hands, and what they can delegate to AI

- How to think about Why, What, and How in a way that makes it harder to drift

1. While talking with AI, what I wanted to build slowly drifted

1-1. Right before launch, I started thinking seriously about the concept

By that point, the product itself was already somewhat real. The functionality was working, and the next thing I needed was a clearer answer to a different question: what was this product actually for, and how should I explain it to other people?

So I started using AI to help me articulate the concept for the landing page. In my head, this felt like a natural extension of design and implementation. I assumed the explanation would simply fall into place afterward.

And to be fair, AI is very good at this kind of work. Even vague thoughts start sounding coherent. It can turn rough ideas into something structured, with plausible target users and a clean value proposition. At that stage, it genuinely felt like everything was moving in the right direction.

1-2. It was logically correct, but it still felt wrong

The concept that came out of those conversations was not nonsense. As an explanation, it worked. There was a person with a problem, and the product would provide value to that person. The story was easy to follow and easy to communicate.

But the more refined it became, the more uncomfortable I felt. Before I noticed, the product was being framed more like a support service that stays close to lonely founders.

That framing was not obviously wrong. It was logically consistent, and I can easily imagine that there is real demand for something like that. But it was not where my excitement lived.

So that was the strange part: the explanation was correct, but it was no longer quite the thing I wanted to build. I only saw that clearly when I was already at the stage of preparing to publish it.

1-3. What I really wanted was a partner that helps people move their challenges forward

What I actually wanted to build was something that would let more people have a partner that helps move their challenges forward.

More concretely, I wanted to increase the number of situations where people could tackle things they had wanted to try, but had struggled to move forward on alone. Someone you can casually ask for advice, rely on when you get stuck, and sometimes even build with. I wanted that kind of partner to be available not just to a few exceptional people, but to anyone.

The clean way to describe it is “a partner that helps you move a challenge forward.” But emotionally, it felt even simpler than that. AI felt like a weapon for taking on the world. I was much more drawn to the feeling of moving something forward than to the idea of gently accompanying someone through their struggles.

That is why the more the framing converged toward a support service that stays close to you, the more the discomfort grew. At that point I finally realized that what I needed to revisit was not the quality of the explanation, but the underlying Why and What.

2. The cause was not AI alone

I do not think this happened because AI arbitrarily changed the direction. I think AI's logical cleanup landed on top of a situation where, on my side, the means had already started to run ahead of the purpose.

2-1. The prototype moved first, and the Why and What were left for later

By the time I reached the pre-launch stage, the prototype was already fairly far along. Because the implementation had taken shape first, I assumed the concept would also be easy to organize afterward.

But having a prototype is not the same thing as having clarity about why you want to build it and what you want to build. In fact, because the product already existed in some form, I started treating Why and What as things I could simply tidy up as explanation before launch.

Once you are in that mode, it becomes very easy to get pulled into the question of “how should I explain what already exists?” The more natural order would have been to keep checking Why and What while prototyping and implementing. In my case, that order had been reversed. That was the first real drift.

2-2. Making things with AI was simply too exciting

Another big part of it was that making things with AI was just a lot of fun. At that time I was so absorbed in building with AI that I spent an entire three-day holiday basically living inside Claude Code.

Before this, building my own product on the side while working full time would have felt extremely hard. I could implement things, but I did not have enough time. With AI, though, even a little free time on a weekend starts turning into something real. Watching a working product emerge piece by piece was genuinely exciting.

And because that excitement was so strong, building itself started drifting toward becoming the goal. I should have been asking what I was building for, but the fact that I could build it had become a much stronger force.

2-3. AI can move forward logically even when the purpose is still vague

What makes AI tricky is that it can produce something that sounds convincing even when the purpose is still vague.

Even if Why and What are not fully articulated, AI can generate plausible target users and a plausible value proposition. The language is polished. The logic makes sense. So even if the direction is drifting away from your real center, that drift is hard to notice at first glance.

That is exactly what happened here. The concept AI helped produce was not “wrong.” If anything, it was a very good explanation. But that still did not mean it was mine.

AI can move forward even when the purpose is fuzzy. That is why, if you use it without holding onto the things you need to hold onto, you can end up somewhere that is logically correct and still different from what you actually wanted to build.

3. What people should hold onto in the age of AI, and what they can delegate

This failure made one thing much clearer for me: I need to think about Why, What, and How as different layers. As AI gets stronger, people do not need to keep everything in their own hands anymore. But that makes it even more important to know what should stay in your hands, and what should not be postponed.

3-1. What humans should hold onto is the Why and the What

The thing you should not delegate to AI is not the work itself. It is the core of the purpose and the value judgment behind the work.

Here, Why means why you want to build it and what values or underlying concerns sit underneath it. What means what you want to build and what kind of state you want to create.

That is where my own thinking was still underdefined. What did I want to make? Why did I want to put this product into the world? Before those questions were sufficiently articulated, the explanation alone became polished first.

The stronger AI becomes, the more humans need to hold onto Why and What. Otherwise, we start getting pulled by answers that are clean and coherent, but not fully ours.

3-2. You can delegate a lot of How to AI

That does not mean the conclusion is “so do not rely on AI.” If anything, once your Why and What are visible, I think you should delegate a lot of How to AI quite aggressively.

Implementation approaches, option generation, brainstorming, structure, first drafts of writing. AI is extremely strong in this layer of How. In my case too, the only reason building itself moved this far was because AI was there. I do not want to deny that value at all.

If anything, AI becomes more useful the clearer your Why and What are. Once the destination is visible, the path forward can often be explored much faster and more broadly with AI than by trying to carry everything alone.

3-3. To avoid getting pulled off course, do not leave Why and What unattended

What matters here is not that Why and What must be perfectly defined from the beginning. I actually think there is real value in clarifying them little by little through dialogue, just as I eventually did through my own conversations with AI.

What matters is keeping Why and What consciously in scope as things you are still exploring. They do not need to be finished. But you should not let prototyping and explanation move ahead while those questions are effectively unattended.

When you use AI as a thought partner, it helps to keep returning to questions like these:

- Why do I want to build it?

- What am I actually trying to build?

- Is the explanation getting cleaner in a way that still points toward what I really want?

What keeps you from getting pulled off course is not distance from AI. It is continuing to check your Why and What while moving forward together.

Conclusion

What this failure made me realize is that the thing we should not delegate to AI is not the work itself, but the core of our purpose and value judgment.

Why and What do not need to be complete from the start, and they can absolutely become clearer through dialogue with AI. But if their confirmation keeps getting postponed while prototypes and explanations move ahead, it becomes much easier to lose sight of what you actually wanted to build.

In the age of powerful AI, the goal is not to keep AI at a distance. The goal is to move forward with it while staying conscious of what still needs to remain in your own hands. That is what this failure taught me.