Introduction

It is not unusual to ask AI to do something for work and get back an output that is not quite what you wanted.

When that happens, it is tempting to think, "Maybe I wrote the prompt poorly." But in many cases, the issue starts earlier than wording. The AI simply does not have enough information to do the job properly.

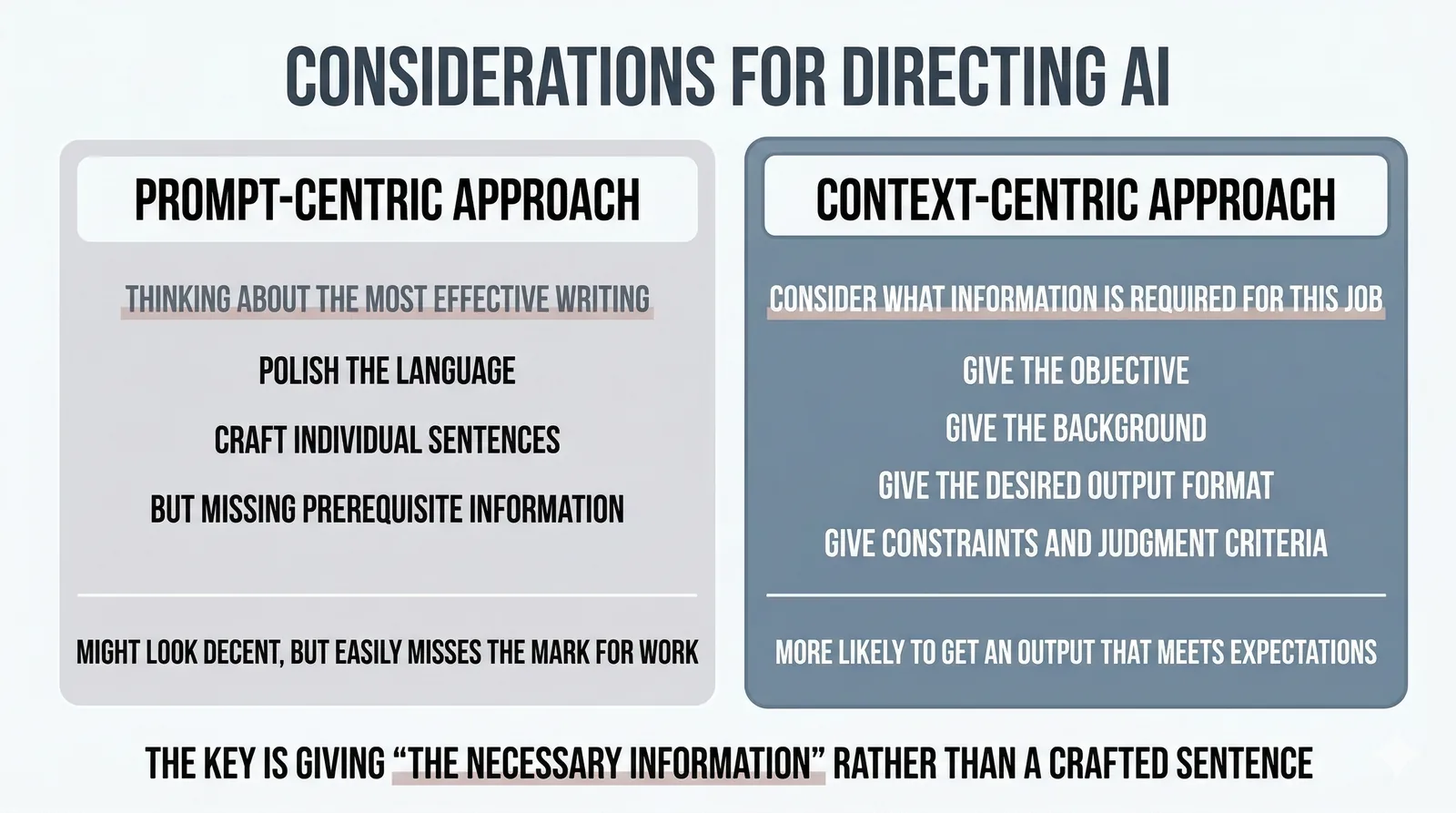

In this article, I want to explain why, when you start using AI for work, what often matters is not fine-tuning the phrasing of your prompt but thinking through what information the AI actually needs. This way of thinking is sometimes called context engineering, but here I want to treat it as a practical working habit rather than a difficult concept.

What This Article Covers

- Why AI outputs can miss the mark even when the prompt sounds reasonable

- Why context often matters more than prompt wording

- How to understand context engineering in a practical way for everyday work

1. Why AI output can miss the mark

You can ask AI to do a task and still get something that feels slightly off. The content may be shallow, the direction may be wrong, or the result may simply not match what you were actually trying to get done. When that happens, it is easy to assume that the answer is to write a smarter prompt.

In my experience, though, what you often need to revisit is not the wording but the context. AI can only reason from the information it has been given. If the purpose, background, and definition of a good result are still vague, the output may look polished while still being wrong for the job.

2. What happens when context is missing

Take meeting notes as an example. Suppose you only give AI a transcript and ask it to "summarize this into meeting notes." It will probably produce some kind of summary. But if it does not know the purpose of the meeting, the roles of the participants, or which points matter most, the result can easily become a cleaned-up list of statements rather than a useful record of decisions and next actions.

Slide writing is similar. If you say, "Create slides based on this," AI can generate something that looks like a plausible deck. But if it does not know who the audience is, whether the deck is for decision-making or simple sharing, and what the main takeaway should be, the result may look neat while still being hard to use in practice. The problem is usually not that AI cannot make slides. It is that the job was missing the context needed to shape them well.

In both examples, the real issue is often not a lack of model capability but a lack of working context. AI can help with wording and structure, but it cannot easily infer what matters most in a task unless that information is actually provided.

3. What to think about before the prompt

What matters here is not coming up with a clever sentence. It is thinking first about what information the task actually requires. It makes a big difference whether, before asking AI for help, you have taken the time to sort out what the task depends on.

For example, you may need to think about:

- what the task is for

- what background the AI should know

- what form the output should take

- what constraints exist

- what counts as a good result

You do not need to articulate all of this perfectly. But it helps a lot if you at least provide a foundation that tells the AI how to understand the work.
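If you assemble prompts in code, the list above can be turned into a small reusable template. The following is a minimal sketch in Python; the `ContextBrief` class and its field names are hypothetical, chosen only to mirror the five questions, and are not part of any standard library or AI API. Empty fields are skipped, in keeping with the idea that the foundation does not have to be complete. The meeting details in the usage example are illustrative.

```python
from dataclasses import dataclass, field


@dataclass
class ContextBrief:
    """Hypothetical container for the context a work task depends on."""
    task: str
    purpose: str = ""
    background: str = ""
    output_format: str = ""
    constraints: list[str] = field(default_factory=list)
    success_criteria: list[str] = field(default_factory=list)

    def to_prompt(self) -> str:
        """Render the brief as plain text, skipping any section left empty."""
        sections = [("Task", self.task)]
        if self.purpose:
            sections.append(("Purpose", self.purpose))
        if self.background:
            sections.append(("Background", self.background))
        if self.output_format:
            sections.append(("Output format", self.output_format))
        if self.constraints:
            bullets = "\n".join(f"- {c}" for c in self.constraints)
            sections.append(("Constraints", bullets))
        if self.success_criteria:
            bullets = "\n".join(f"- {c}" for c in self.success_criteria)
            sections.append(("A good result", bullets))
        return "\n\n".join(f"{title}:\n{body}" for title, body in sections)


# Illustrative usage: the meeting-notes request, with context instead of a bare instruction.
brief = ContextBrief(
    task="Summarize the attached transcript into meeting notes.",
    purpose="Record decisions and next actions from the planning meeting.",
    background="Attendees: product lead (decision owner), two engineers, one designer.",
    output_format="Sections: Decisions, Action items (owner, due date), Open questions.",
    constraints=["Keep it under one page.", "Do not include small talk."],
    success_criteria=["Someone who missed the meeting knows what was decided and who does what next."],
)
print(brief.to_prompt())
```

The structure matters more than the code: each field forces you to decide whether that piece of context exists yet, and a field you cannot fill is often a sign of what the AI will get wrong.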

4. This is also what people mean by context engineering

Recently, people have started using the term context engineering for this kind of thinking. The phrase can sound technical, but the idea here is simple: give AI the information it needs in order to do the work well. At least in my own practice, that is the part that matters first.

That does not mean prompt writing is irrelevant. For small and simple tasks, wording and instruction style can absolutely help. But in real work, if the underlying context is missing, polishing the phrasing alone will not solve the problem. It is a question of priority.

5. You do not need to provide all context up front

That said, this does not mean you need to write a giant perfect prompt from the start. If you frame it that way, the idea of context quickly becomes another burden.

In practice, it is often enough to provide the foundation first: the purpose of the task, what kind of output you want, and any especially important constraints. Then, as you see the AI's response, you can add what was missing. You may realize that you should have explained more background, or that your evaluation criteria needed to be clearer. Context does not always have to be injected all at once. In many cases, it is something you refine through the conversation.
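That loop can be sketched in a few lines, assuming a chat-style interface where the conversation is kept as a list of role/content messages. This is a common convention, used here without calling any specific vendor API. The helpers `start_conversation` and `add_context` are hypothetical; the point is that missing context is appended as a follow-up turn instead of rewriting the original request from scratch.

```python
def start_conversation(foundation: str) -> list[dict[str, str]]:
    """Begin with only the foundation: purpose, desired output, key constraints."""
    return [{"role": "user", "content": foundation}]


def add_context(history: list[dict[str, str]], ai_reply: str, missing: str) -> list[dict[str, str]]:
    """Record the AI's reply, then append the context you realized was missing.

    Returns a new list so earlier states of the conversation stay intact.
    """
    return history + [
        {"role": "assistant", "content": ai_reply},
        {"role": "user", "content": missing},
    ]


history = start_conversation(
    "Draft slides from these notes. Purpose: get approval for the new onboarding flow. "
    "Keep it to five slides."
)
# After reading the first draft, you notice the audience was never stated.
history = add_context(
    history,
    ai_reply="(first draft of the slides)",
    missing="The audience is the leadership team; lead with the decision you need from them.",
)
for message in history:
    print(f"{message['role']}: {message['content']}")
```

Keeping the first request untouched and layering context on top mirrors how this usually goes in practice: the draft shows you what was missing, and you supply exactly that.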

6. What information AI usually needs for work

When you ask AI to help with work, it is often useful to think in terms of a few basic questions: What is this task for? Who is the output for? What form should it take? What constraints matter? What would make the result good enough to use? You do not have to treat these as a strict checklist, but when these perspectives are missing, AI tends to produce outputs that sound reasonable without actually fitting the task.

The same applies to engineering work. If you ask AI to "implement this feature" without sharing the design intent, the assumptions in the existing codebase, the constraints, or the acceptance criteria, you may still get working code, but it can easily miss the point. Whether the task is meeting notes, slide writing, or implementation, the core issue is often the same: has the AI been given the context it needs to do the work well?

The idea of context discussed in this article is also one part of the larger structure of an AI agent. If you want to see how context fits together with models, harnesses, and tools, the article "How AI Agents Work: Models, Harnesses, Context, and Tools" may also be useful.

Conclusion

When you ask AI to do work, what matters most is often not crafting a better prompt, but providing the context the task actually needs. If the purpose and background are missing, the AI may still produce something polished, but that does not mean it will be useful for the job.

And that context does not need to be perfect from the beginning. Start with the foundation, then refine it through the conversation as you notice what is missing. If AI is not doing what you expected, it may be worth looking at the missing context before spending more time polishing the prompt itself.