Introduction

When you are asked to lead AI adoption inside an organization, it is surprisingly hard to tell where to start.

Should you begin with training? Should you distribute tools first? Should you collect and share use cases? I was thinking along those lines at first as well.

But after working through this in an organization of about 70 people, I came away with a different impression. What mattered more than the training itself was how the whole rollout was designed around it. In other words, the bigger question was not just how to teach AI, but how to create the conditions in which AI actually gets used.

What seemed especially important was not starting with training right away. Instead, I first spent time sharing why AI mattered, and only then made the environment available for people to try.

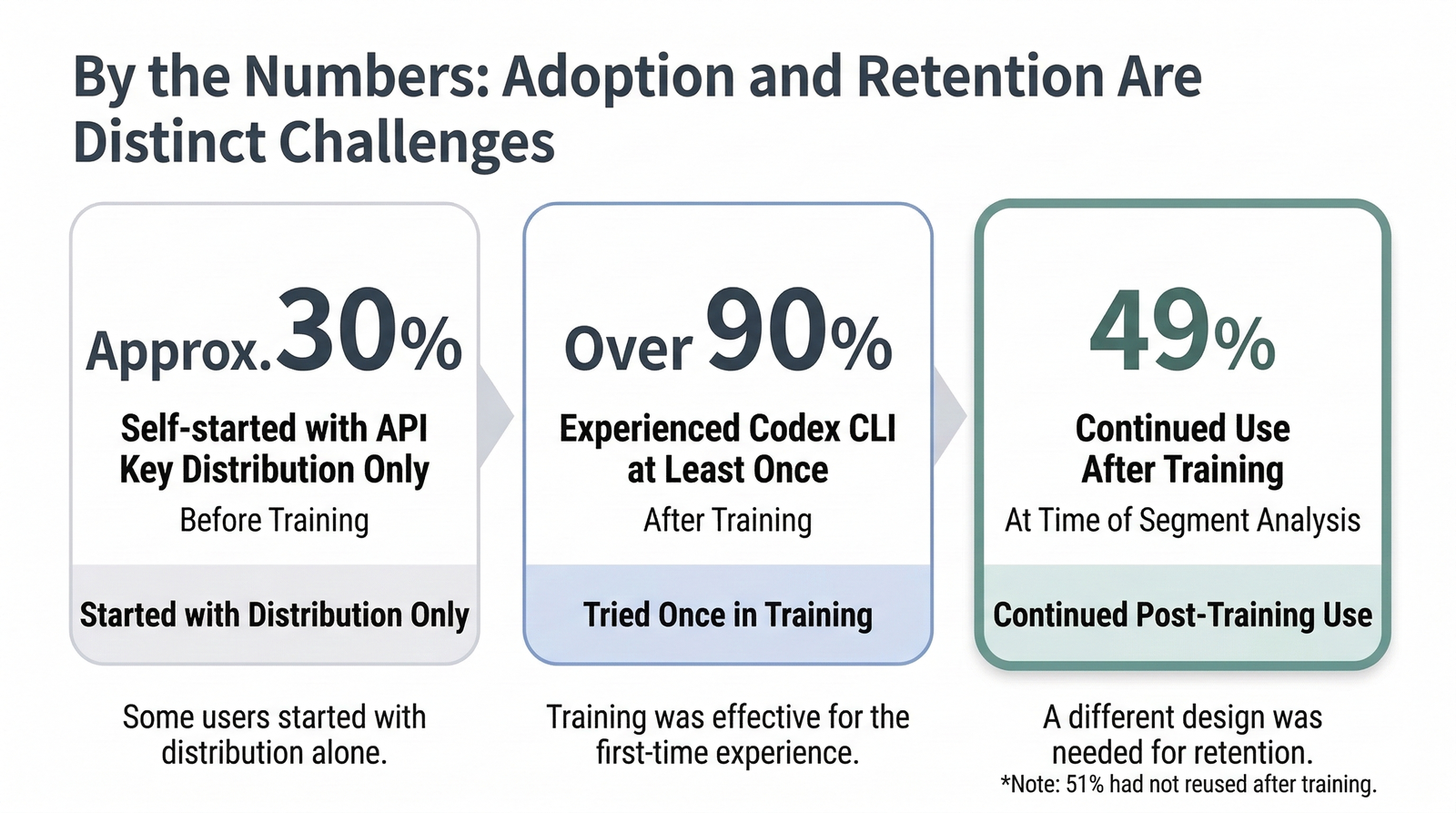

As a result, even before training, simply distributing API keys led about 30% of the group to start exploring on their own. At the same time, while training helped more than 90% of participants try Codex CLI at least once, only 49% continued using it afterward. That made it clear that getting people to try something once and getting them to keep using it are different problems.

In this post, I want to look back on how I actually approached this rollout and share what felt most important in practice. Every organization is different, so I do not think this can be turned into a universal template. But I hope it can still be useful as a way of thinking about the conditions that make AI adoption work.

What This Article Covers

- How to think more clearly about where to start when you are asked to drive AI adoption

- The actual rollout flow I used in an organization of about 70 people

- What seemed to matter most in hindsight, and why training alone was not enough

1. What Makes AI Adoption Hard to Start

One reason internal AI adoption is hard is that many possible actions all seem important at the same time. Training matters. Tool access matters. Sharing use cases matters. But that also makes it hard to see which one should come first.

In practice, it is very easy to default to training. It is visible, easy to explain, and it makes it feel like the organization is moving. But looking back, I do not think training alone is enough to create ongoing, self-directed usage.

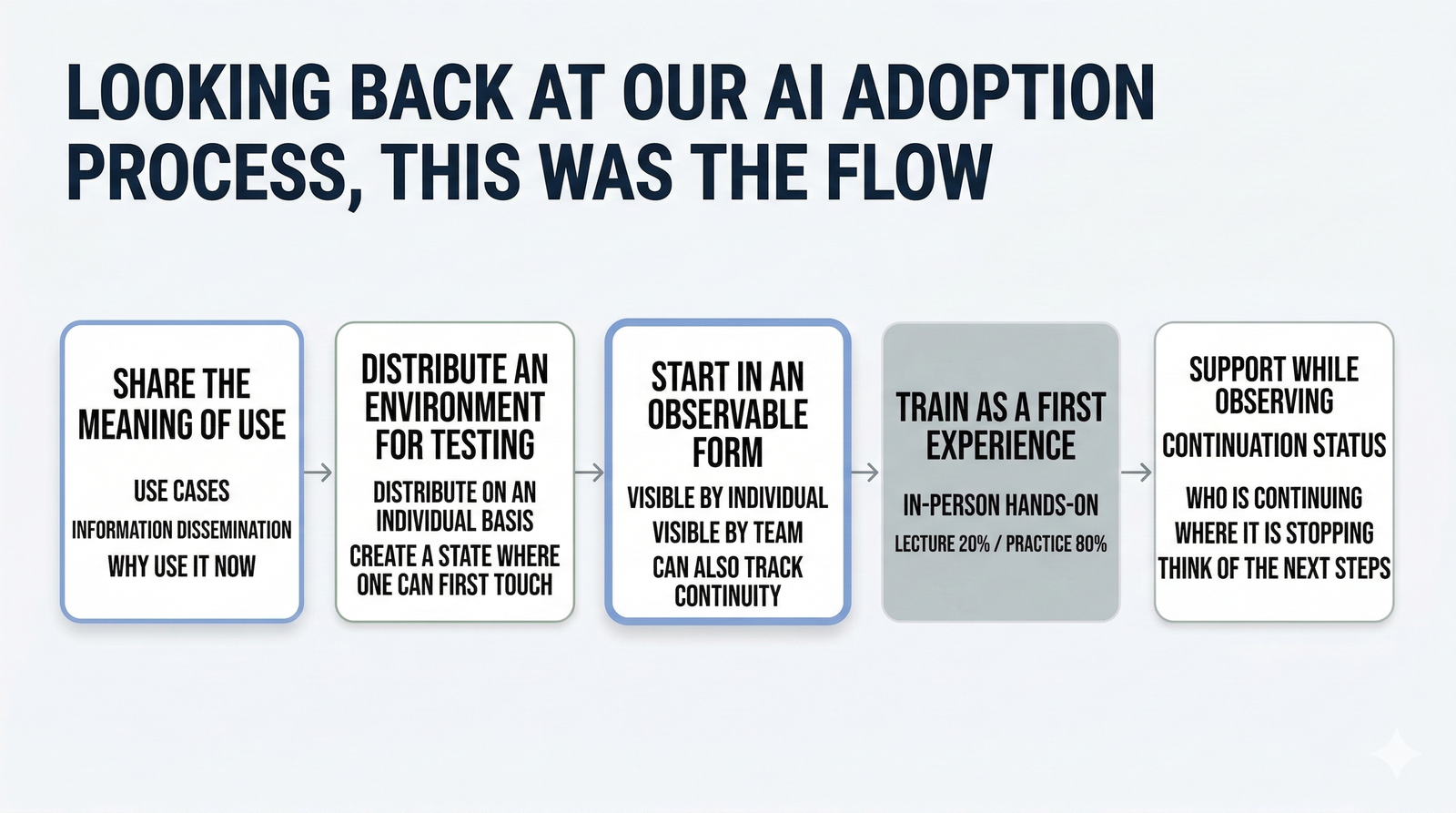

My current view is that AI adoption becomes easier to reason about when you see it as a flow rather than a one-off initiative:

- First, share why people should use it

- Then make it easy to try

- Start in a way that makes usage observable

- Use training as a first hands-on experience

- Then look at who continues using it

Looking back, this flow mattered more than the question of whether training should happen or not.

This is roughly what that flow looked like in practice.

At least in my case, the important thing was not whether to run training, but how to launch the rollout, how to observe what happened, and how to keep it going afterward.

2. How I Actually Rolled It Out

2-1. I spent a month sharing why AI mattered before training

The first thing I did was not training. It was sharing why AI was worth using in the first place. From January to early February 2026, for about a month, I gave weekly internal presentations on how I was using AI in my own work, along with ongoing written updates.

I tried not to frame this as a stream of “useful new features.” Alongside concrete examples, I also talked about broader trends and ideas such as general-purpose agents, harnesses, and context. I wanted to make the case that this was not just about coding. AI was likely going to become part of everyday work more broadly.

Looking back, what I was really doing in that period was not just explaining AI. I was trying to align on why it mattered now. Before asking people to use it, I was trying to create a shared sense of why it was worth trying at all.

2-2. Then I distributed access in a way that could be measured later

The next step was distribution. Before the training sessions, I gave people API keys on an individual basis.

I did that because I wanted usage to be observable later. If everyone had shared the same API key, I would not have been able to tell who was actually using it. That would have made it much harder to see adoption at the individual, team, or organizational level, and much harder to evaluate retention accurately.

To me, the point of an adoption effort is not just to start it, but to improve it after it starts. That means you need to begin in a way that can be observed.

At that stage, simply distributing API keys was enough for about 30% of people to start using the tools on their own. I cannot say distribution alone caused that, but it was clear that once people had a usable environment, some of them were willing to explore without waiting for formal training.

2-3. Training was designed as a first hands-on experience

I designed the training sessions less as lectures and more as a first practical experience.

There was no single day when all 70 people could gather at once, so I ran the same content across multiple sessions. I also chose in-person sessions as the default because I thought real-time support would be difficult online. In practice, people get stuck in different places during initial setup, so it was much easier to support them face to face.

The sessions were roughly 20% lecture and 80% hands-on practice. Using the tool itself is not especially difficult in principle. At a basic level, you are just typing natural-language instructions. The bigger friction tends to be setup and that first moment of not knowing how to start. That is why I felt it mattered more to give people time to actually use it than to explain everything in detail.

The initial goal was not to make people highly proficient right away. It was simply to help them get the feel of manipulating local files through natural language, and to leave the session thinking, “This is not as hard as I expected” or “I could probably use this myself.”

In this rollout, I used Codex CLI for the hands-on sessions. But the important part was not the specific tool. It was creating one real moment where people could make AI do something with their own hands.

2-4. After training, I looked at continued usage

As an entry point, the training worked fairly well. More than 90% of participants tried Codex CLI at least once.

But when I later looked at usage segments, 51% had not used it again after training, while 49% had continued using it. There are more detailed segments underneath that, but even at a high level the message was clear: getting people to try it once and getting them to keep using it are not the same thing.

When you line the numbers up, it becomes easier to see that initial access and continued use are separate layers of the problem.

That was the moment when I felt most clearly that training can work as an entry point, but not as the whole adoption strategy. There still needs to be another layer of design between a first hands-on experience and sustained use in real work.

3. What Seemed to Matter Most in Hindsight

3-1. The pre-training awareness phase mattered the most

Looking back, the part that seems to have mattered most was the month I spent sharing why AI mattered before any formal training happened.

That period was not just about presenting examples. I was also trying to share a broader sense of direction: how I was using AI myself, what trends seemed important, and why this was not only a coding topic but something likely to affect everyday work more broadly.

In other words, I was trying to align not only on what AI could do, but on why it was worth touching now.

My sense is that this is one of the main reasons around 30% of the group started exploring as soon as I distributed access. I cannot prove strict causality, but I do think the order mattered a lot. Sharing the meaning first, then making it easy to try, felt much more effective than doing those steps in reverse.

At least in my experience, it is more important than it may first appear to create that shared “why” before you ask people to start using AI.

3-2. It also mattered to start in a way that could be observed

Another important lesson was that it was not enough to simply distribute access. It also mattered that I started in a way that made later observation possible.

If you cannot see what is happening after the rollout begins, it becomes much harder to decide what to do next. You cannot tell who is starting to use it, which teams are picking it up, or where people are getting stuck. And if you cannot see those things, it is hard to decide whether you need more training, more support, or more examples.

That was one reason I distributed individual API keys. It adds some overhead at the start, but it gives you a much better foundation for retention analysis and segmentation later. Looking back, this idea of “start in a way you can observe later” felt more important than I expected.

3-3. Training works for first contact, but retention needs a separate design

Training was effective as a way to create an initial hands-on experience. More than 90% of participants tried the tool at least once, so in that sense it clearly did its job.

But continued use was a different matter. A person can leave a workshop having touched the tool once and still never use it again in their real work. To get beyond that, you need something else: a clearer connection to actual tasks, a place to ask questions, more examples, or some other kind of follow-up support.

That is why I think training becomes a weak stopping point if you treat it as the goal. Training matters, but its role is closer to opening the door than to finishing the adoption effort.

3-4. In the end, this was not an event but an operating model

Putting it all together, internal AI adoption did not feel like a one-time event. It felt like an operating model.

First, share why it matters. Then create an environment people can try. Make sure the rollout is observable from the beginning. Use training as a first hands-on experience. After that, look at who continues using it and where additional support is needed.

Seen that way, the success or failure of the effort was never really about the quality of the training session alone. It was about the surrounding operating design.

4. So Where Should You Start If You Are Asked to Drive AI Adoption?

Based on this experience, I do not think the first move should be to plan training immediately. A more useful order is something like this.

4-1. Start by sharing why it matters

Before teaching people how to use AI, it helps to align on why they should care now.

What kinds of work could it actually help with? What would get easier? Why is this something worth trying now rather than later? If those questions stay fuzzy, then even good training or easy access can still feel irrelevant to many people.

4-2. Then make it easy to try

Once the “why” is there, the next step is to create an easy entry point.

In my experience, it works better to let people try early than to wait until every explanation is complete. In my case, once access was available, some people started exploring on their own even before training happened.

4-3. Start in a way that can be observed later

This part also matters more than it may seem at first.

Who is using it? Which teams are picking it up? Who continues after the first experience? If you cannot answer those questions later, it becomes much harder to improve the rollout. That is why I think it is worth putting at least a little effort into making the rollout observable from the start.

4-4. Use training after that, not before everything else

Training is still important. I just do not think it should be treated as the very first move.

And when you do run it, it helps to think of it less as a lecture and more as a designed first experience. What matters is not whether participants hear every explanation, but whether they leave thinking, “I used it once, and I can probably do this again.”

4-5. Finally, look at continued usage

In adoption efforts like this, it matters not only how many people touched the tool once, but how many people are still using it afterward.

Who stops early? Who keeps going? Which teams look different from others? Once you can see that, your next actions become much easier to choose.

Conclusion

Looking back, I do not think internal AI adoption starts with training. It starts with creating the conditions in which people are willing and able to use it.

That means first sharing why it matters, then making it easy to try, and beginning in a way that can be observed and improved later. Training still plays an important role, but as part of that broader flow rather than as the whole story.

In my case, spending about a month sharing why AI mattered before distributing access seemed to make a real difference. Even before training, that was enough for around 30% of people to start exploring on their own. At the same time, training created first contact, but not retention on its own.

Every organization will have different constraints, so I do not think there is a single correct template here. Still, I hope this can be useful as one practical way to think about what needs to be in place before AI adoption really starts to stick.

This article focuses on how to launch AI adoption at the organizational level. If you want a complementary view on how to rethink individual business workflows, where to keep things deterministic, and where AI actually belongs, see The First Step in AI-Driven Process Improvement Is to Break Down the Workflow.